LLMs Are Wrong for Mission-Critical Tasks

- Charles Rathmann

- Apr 2

Why Large Language Models (LLMs) Are a Miss for Industrial, Process, Engineering and Other Applications

Media coverage of artificial intelligence (AI) currently focuses on large language models (LLMs), largely because some of them are well-known consumer products.

The freemium model gives products like ChatGPT, Claude, Gemini, Perplexity AI, and others tremendous reach. They are designed as consumer products, and in the enterprise they are best seen as tools for human-computer interaction.

LLMs are increasingly being integrated into mission-critical industrial and defense applications, but they are generally treated as glorified reference librarians that pull pieces of information from large data sets or answer questions, rather than as autonomous decision-makers. Even agentic AI, which can string tasks together and execute them independently, is not yet reliably in place in business as of early 2026. According to McKinsey, agentic AI has succeeded in only about 11% of the settings where it has been tried, and those successes are in well-governed processes.
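One reason chained tasks are hard to make reliable is that small per-step error rates compound. The back-of-the-envelope sketch that follows assumes every step succeeds independently with the same probability. That is a simplification for illustration, not how the McKinsey figure was derived, and the function name `chain_success` is hypothetical.

```python
def chain_success(step_success: float, steps: int) -> float:
    """Probability that an n-step task chain finishes with no failed step.

    Assumes (hypothetically) that each step succeeds independently with
    the same probability `step_success`.
    """
    if not 0.0 <= step_success <= 1.0:
        raise ValueError("step_success must be a probability")
    return step_success ** steps


if __name__ == "__main__":
    for p in (0.99, 0.95, 0.90):
        row = ", ".join(f"{n} steps: {chain_success(p, n):.0%}" for n in (1, 5, 10, 20))
        print(f"per-step {p:.0%} -> {row}")
```

Under these assumptions, a step that is right 95% of the time gets a 20-step chain through cleanly only about 36% of the time (0.95^20 ≈ 0.358).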

LLMs, meanwhile, dominate consumer-facing AI applications because their excellent command of language mechanics makes them highly usable. On the enterprise front, I’ve seen a rash of enterprise software and point-solution vendors add a front end, usually ChatGPT or Microsoft Copilot. This delivers the usability advantage and, more importantly, gives them a basis for AI messaging in the market.

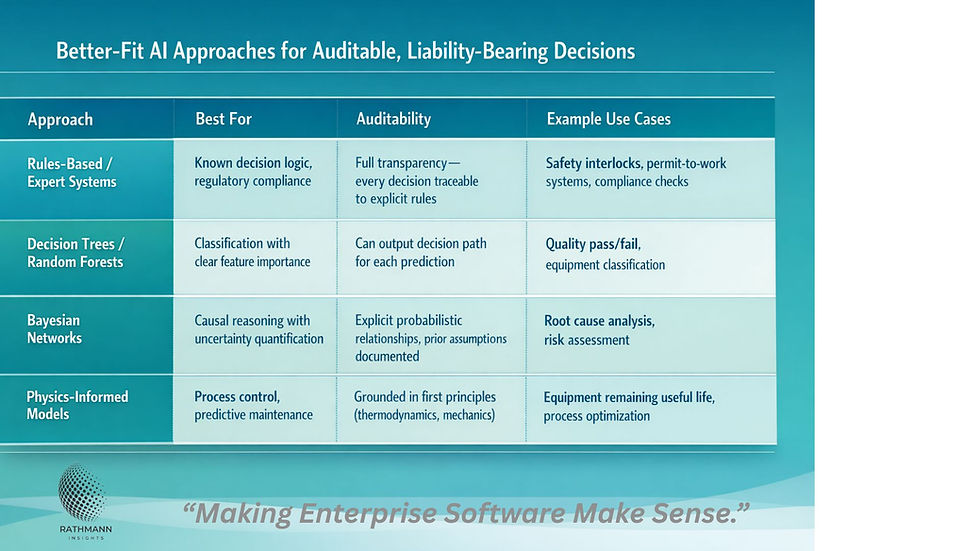

But once AI enters the operational realm, particularly in mission-critical sectors like energy, automation or defense, the downsides of LLMs suggest that other AI tools are more useful.

Why LLMs are Wrong for Industrial Use Cases

Non-Determinism

LLMs produce probabilistic outputs, which means the same input can yield different outputs. LLMs can also generate plausible-sounding but factually incorrect outputs. Because each new response is conditioned on the earlier ones in the conversation, these errors can magnify and compound over time.
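Much of this non-determinism comes from how text is decoded. Many deployments sample each next token from a probability distribution instead of always taking the top choice, and a temperature parameter controls how flat that distribution is. The minimal sketch that follows assumes a made-up three-token vocabulary and hand-picked scores (real models score tens of thousands of tokens). It contrasts greedy decoding, which always returns the same answer, with sampling, which does not.

```python
import math
import random

# Hypothetical next-token scores (logits) after some prompt; tokens and values are made up.
LOGITS = {"open": 2.0, "close": 1.6, "hold": 0.4}


def softmax(logits, temperature):
    """Convert logits to probabilities; higher temperature flattens the distribution."""
    scaled = {tok: v / temperature for tok, v in logits.items()}
    m = max(scaled.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(v - m) for tok, v in scaled.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}


def greedy(logits):
    """Deterministic decoding: always pick the highest-scoring token."""
    return max(logits, key=logits.get)


def sample(logits, temperature, rng):
    """Stochastic decoding: draw one token in proportion to its probability."""
    probs = softmax(logits, temperature)
    tokens = list(probs)
    return rng.choices(tokens, weights=[probs[t] for t in tokens], k=1)[0]


if __name__ == "__main__":
    rng = random.Random()  # unseeded, as in a typical interactive session
    print("greedy :", [greedy(LOGITS) for _ in range(5)])
    print("sampled:", [sample(LOGITS, 1.0, rng) for _ in range(5)])
```

With these scores, "close" still carries roughly a one-in-three probability at temperature 1.0, so the same prompt routinely produces a different action.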

This is not acceptable when you need reproducible, verifiable decisions in use cases like safety shutdowns, quality certifications, automated vehicles or weapons system deployment.

DO-178C, the industry standard for commercial and military airborne software, and MIL-STD-882, the U.S. Department of Defense standard for identifying, evaluating, and eliminating or mitigating risk throughout a system's life cycle, both require bit-for-bit reproducibility for certification.
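Bit-for-bit reproducibility can be checked mechanically: replay the same input many times and compare cryptographic digests of the outputs. The minimal sketch that follows is built on stated assumptions. The function names, the 850 kPa trip limit and the random-phrasing stand-in are all hypothetical. The stand-in only imitates the symptom of a sampled model and does not call any real LLM.

```python
import hashlib
import json
import random


def canonical_digest(output: dict) -> str:
    """SHA-256 of a canonical JSON encoding: identical outputs give identical digests."""
    encoded = json.dumps(output, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


def is_reproducible(decide, inputs: dict, runs: int = 20) -> bool:
    """Replay the same input `runs` times; True only if every output is bit-identical."""
    digests = {canonical_digest(decide(inputs)) for _ in range(runs)}
    return len(digests) == 1


# Hypothetical deterministic rule: trip when pressure exceeds a fixed limit.
TRIP_LIMIT_KPA = 850.0


def rule_based_trip(inputs: dict) -> dict:
    return {"trip": inputs["pressure_kpa"] > TRIP_LIMIT_KPA}


# Hypothetical stand-in for a sampled model: the decision is the same, but the
# wording of its rationale varies from run to run. No real LLM is called.
_rng = random.Random()
_PHRASINGS = ["Pressure high.", "Pressure above limit.", "Overpressure detected."]


def sampled_trip(inputs: dict) -> dict:
    return {
        "trip": inputs["pressure_kpa"] > TRIP_LIMIT_KPA,
        "rationale": _rng.choice(_PHRASINGS),
    }


if __name__ == "__main__":
    reading = {"pressure_kpa": 900.0}
    print("rule-based reproducible:", is_reproducible(rule_based_trip, reading))
    print("sampled reproducible:   ", is_reproducible(sampled_trip, reading))
```

The stand-in makes a narrow point. Even when the decision itself agrees, a byte-level check fails as soon as any part of the output varies.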

In industrial settings, a hallucinated maintenance recommendation could cause equipment damage or safety incidents.

In defense, incorrect threat classification or logistics data could be catastrophic, costing human lives and destroying trust in a military force or governing body.

Explainable AI

Even when the output of an LLM is not in and of itself problematic, decisions an AI makes in an industrial or defense setting must be explainable. This is critical when things go wrong, because an audit will need to assign root cause and liability for a failure. Was the software itself not performing adequately, or

- LLMs are "black boxes": they cannot provide verifiable causal reasoning chains.

- Regulators, auditors, and courts require clear decision logic.

- "The AI said so" is not a valid defense in product liability, environmental compliance, or securities cases.

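Purpose-built, rule-based logic is one way to supply the decision logic regulators ask for, because the system can record why it acted. The minimal sketch that follows rests on stated assumptions. The sensor names, thresholds, rule IDs and the `decide` function are hypothetical and are not drawn from any standard. Each rule evaluated is logged in order, so an auditor can see exactly which condition drove the action.

```python
from dataclasses import dataclass, field


@dataclass
class Decision:
    action: str
    trace: list = field(default_factory=list)  # one line per rule evaluated, in order


# Hypothetical rules in priority order: (id, description, predicate, action).
# Sensor names and thresholds are illustrative, not taken from any standard.
RULES = [
    ("R1", "vibration > 12 mm/s", lambda s: s["vibration_mm_s"] > 12.0, "shutdown"),
    ("R2", "bearing temp > 95 C", lambda s: s["bearing_temp_c"] > 95.0, "shutdown"),
    ("R3", "vibration > 7 mm/s", lambda s: s["vibration_mm_s"] > 7.0, "schedule_inspection"),
]


def decide(sensors: dict) -> Decision:
    """Evaluate rules in order; the first one that fires sets the action."""
    decision = Decision(action="continue")
    for rule_id, description, predicate, action in RULES:
        fired = predicate(sensors)
        decision.trace.append(f"{rule_id} ({description}): {'FIRED' if fired else 'not met'}")
        if fired:
            decision.action = action
            break  # stop at the first match so the cause is unambiguous
    return decision


if __name__ == "__main__":
    result = decide({"vibration_mm_s": 8.5, "bearing_temp_c": 70.0})
    print(result.action)
    for line in result.trace:
        print("  ", line)
```

For the sample reading, the trace shows R1 and R2 not met and R3 fired, which is the kind of causal chain an audit can check line by line.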
The Need for Speed

Real-time processes such as industrial control, defense systems and dynamic scheduling need faster responses than an LLM can deliver. Part of the barrier is processing speed; the other part is where the AI runs. A cloud service is too slow, and so is a local server hosting a private instance of an LLM. An LLM can take seconds to complete complex reasoning, while a purpose-built edge AI model can perform the equivalent task in microseconds or milliseconds. On top of that, a cloud-based LLM adds roughly 50x more latency than an edge deployment.
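To make the arithmetic concrete, here is a worked latency budget. Every number in it is a placeholder assumption, not a measurement:

- a 10 ms control-loop deadline
- 2 ms for a hypothetical edge model
- 2 seconds for an LLM's reasoning
- the article's ~50x figure, read as a multiplier on a 0.5 ms local network hop (that reading is an assumption)

```python
# Hypothetical timing figures in milliseconds, chosen only to illustrate the arithmetic.
CONTROL_CYCLE_MS = 10.0  # assumed deadline for one control-loop decision


def fits_deadline(inference_ms, network_ms, deadline_ms=CONTROL_CYCLE_MS):
    """Return (total latency in ms, whether it meets the deadline)."""
    total = inference_ms + network_ms
    return total, total <= deadline_ms


edge_network_ms = 0.5                    # assumed local network hop
cloud_network_ms = 50 * edge_network_ms  # ~50x figure applied to that hop (assumption)

scenarios = {
    "edge model": fits_deadline(inference_ms=2.0, network_ms=edge_network_ms),
    "cloud LLM": fits_deadline(inference_ms=2000.0, network_ms=cloud_network_ms),
}

for name, (total, ok) in scenarios.items():
    verdict = "meets" if ok else "misses"
    print(f"{name}: {total:.1f} ms -> {verdict} the {CONTROL_CYCLE_MS:.0f} ms deadline")
```

With these placeholder numbers, the cloud path misses a 10 ms deadline by more than two orders of magnitude. Most of the miss comes from inference time rather than the network hop.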

What Are LLMs Good For?

LLMs are good at some of the things humans are good at: proceeding under uncertainty, interfacing with people in a consumer-grade environment and working with unstructured data. As the name suggests, language mechanics and human interaction are core strengths. But like humans, they tend to make stuff up to fill gaps in their knowledge. These hallucinations, the equivalent of human bullsh*t, make them unsuitable for mission-critical work.

But can they replace humans in the workplace? In some cases, maybe. In others, maybe not--or if they can, not anytime soon given current in-the-trenches realities with agentic AI.

They are also good at consuming capital. LLMs consume an order of magnitude more compute power than traditional AI methods and require more expensive, more powerful servers. The data centers themselves and the power generation capacity they require both consume capital, and that capital is now often pulled out of software development and human resources budgets.

This in turn creates one of two scenarios. In the first, capital invested in LLM data centers reaps a massive return because those data centers find use cases whose payoff merits their total cost. Along the way, it empowers capital and degrades worker power. In the second, that investment is the air inside a bubble that will burst, leaving capital stranded and our economy hobbled as AI is being positioned to replace people in teaching, sales, software development and the creative disciplines.

Bottom line: LLMs are a poor fit for most industrial and mission-critical use cases. But the economy has long organized itself around petroleum, which delivers a higher return on invested capital than renewables. In much the same way, we are probably seeing an entire economy shift to accommodate capital investment in chip manufacturing, data centers and simulated humanity.

Perhaps. But regardless, mission-critical AI use cases should not be affected as long as the rush to LLMs does not infiltrate areas where only deterministic, auditable, reliable AI is appropriate.
